When you send a Bitcoin transaction, it doesn’t go straight to the recipient. It waits in a queue (sometimes for minutes, sometimes for hours) until it gets packed into a block. That block has a limit. And that limit? It’s called block size. It’s not just a technical detail. It’s what decides whether your transaction clears in the next block or gets stuck for days.

What Block Size Actually Means

Block size is the maximum amount of data a single block can hold. Think of it like a truck. Each block is a truck that carries transactions from one point to another. A 1MB truck can only carry so many packages. If too many people are shipping at once, trucks fill up. In Bitcoin’s case, a new truck arrives about every 10 minutes on average, but if the line is long, you wait.

Bitcoin’s 1MB block size limit was added by Satoshi Nakamoto in 2010, largely as a guard against spam. That number wasn’t magic; it was practical. Back then, most people ran nodes on home computers. A 1MB block kept the network lightweight, so anyone with a decent internet connection could participate. But as usage grew, so did the strain. By 2017, Bitcoin was processing only 3 to 7 transactions per second. Visa, by comparison, handles over 1,700 per second.

How Block Size Directly Impacts Speed

Throughput, the number of transactions a blockchain can handle per second, isn’t just about how fast blocks are made. It’s the number of transactions per block divided by the time between blocks. Double the block size? Double the transactions per block. Halve the block time? Double again. Simple math, but the consequences aren’t.
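To make that arithmetic concrete, here is a small Python sketch. The ~250-byte average transaction is an assumption for illustration (real transactions vary widely in size), and throughput_tps is a hypothetical helper, not part of any Bitcoin software.

```python
def throughput_tps(block_size_bytes: int, avg_tx_bytes: int, block_interval_s: float) -> float:
    """Transactions per second = transactions per block / seconds per block."""
    txs_per_block = block_size_bytes // avg_tx_bytes  # whole transactions that fit
    return txs_per_block / block_interval_s

# Assumed ~250-byte average transaction (illustrative, not a measured figure).
bitcoin = throughput_tps(1_000_000, 250, 600)   # 1MB block every 10 minutes
bigger = throughput_tps(2_000_000, 250, 600)    # double the block size
faster = throughput_tps(1_000_000, 250, 300)    # halve the block interval

print(f"{bitcoin:.2f} TPS, {bigger:.2f} TPS, {faster:.2f} TPS")
# prints "6.67 TPS, 13.33 TPS, 13.33 TPS"
```

Under these assumptions a 1MB, 10-minute chain lands at roughly 6.7 TPS, inside the 3 to 7 range quoted above, and pulling either lever doubles it.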

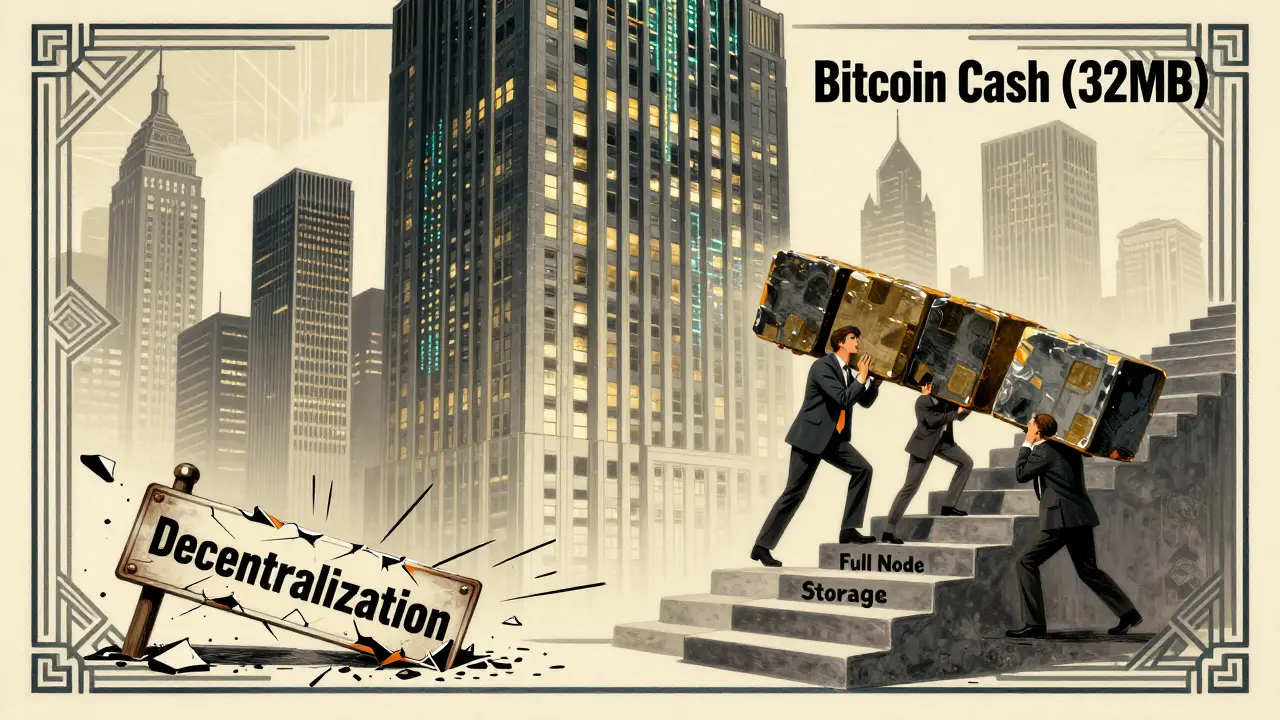

Take Bitcoin Cash. It forked from Bitcoin in 2017 with an 8MB block size and raised the limit to 32MB in 2018. On paper, that lets it handle over 200 transactions per second. No more waiting. No more $50 fees during spikes. That’s why some users flocked to it. But bigger blocks mean bigger demands. Every full node (every computer verifying the chain) must download, store, and validate every single block. A full 32MB block every 10 minutes? That’s 4.6GB per day, or about 1.7TB per year. Most home users can’t handle that. And that’s where the trade-off begins.
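The storage figure is easy to check. This sketch assumes every block is completely full (real Bitcoin Cash blocks usually are not), uses decimal megabytes, and the helper name is hypothetical.

```python
MB = 1_000_000  # decimal megabyte, matching the article's rounding

def chain_growth_bytes(block_size_bytes: int, block_interval_s: int, days: int) -> int:
    """Worst-case chain growth if every block is filled to the limit."""
    blocks_per_day = 86_400 // block_interval_s  # 144 blocks at 10-minute spacing
    return block_size_bytes * blocks_per_day * days

per_day = chain_growth_bytes(32 * MB, 600, 1)
per_year = chain_growth_bytes(32 * MB, 600, 365)
print(f"{per_day / 1e9:.2f} GB/day, {per_year / 1e12:.2f} TB/year")
# prints "4.61 GB/day, 1.68 TB/year"
```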

The Decentralization Trade-Off

Blockchain’s biggest selling point is decentralization. No bank. No middleman. Just thousands of independent computers agreeing on what’s true. But larger blocks make it harder to run a full node. More storage. More bandwidth. More processing power. The result? Fewer nodes. And fewer nodes mean less decentralization.

By 2024, Bitcoin had roughly 5,000 full nodes. Bitcoin Cash? Around 1,200. Bitcoin SV, which removed block size limits entirely, has fewer than 500. Why? Because running a full node on SV requires enterprise-grade servers. You can’t do it on a Raspberry Pi anymore. And if only big companies or well-funded groups can run nodes, who’s really in control? The answer isn’t pretty.

This isn’t theoretical. In November 2018, a fight over Bitcoin Cash’s next upgrade, including Bitcoin SV’s push for 128MB blocks, split the network in two. Exchanges paused deposits and withdrawals, miners waged a “hash war” over which chain would prevail, and users lost confidence. Block size isn’t just about speed; it’s about who gets left behind.

How Other Blockchains Handle It

Not all blockchains use fixed block sizes. Ethereum doesn’t. Instead, it uses a gas limit: a cap on how much computational work each block can handle. After the 2021 London upgrade, Ethereum targeted about 15 million gas per block, with a hard cap of 30 million. That’s flexible. Block producers (miners before the 2022 Merge, validators since) can nudge the limit up or down slightly from one block to the next, and it has since been raised. But even then, during NFT drops or DeFi surges, gas fees have spiked to $100+. Why? Because the limit is still too low for mass adoption.
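The “slightly” has a precise meaning. The sketch below follows the gas-limit bound written into the EIP-1559 specification: a block’s gas limit must differ from its parent’s by less than parent // 1024 and may not drop below 5,000. The function and constant names are our own, and this checks only that one rule, not a whole block.

```python
GAS_LIMIT_BOUND_DIVISOR = 1024  # bound used in the EIP-1559 validity checks
MIN_GAS_LIMIT = 5_000

def is_valid_gas_limit(parent_gas_limit: int, gas_limit: int) -> bool:
    """True if gas_limit stays within the allowed step from the parent's limit."""
    max_delta = parent_gas_limit // GAS_LIMIT_BOUND_DIVISOR
    within_step = parent_gas_limit - max_delta < gas_limit < parent_gas_limit + max_delta
    return within_step and gas_limit >= MIN_GAS_LIMIT

parent = 30_000_000
step = parent // GAS_LIMIT_BOUND_DIVISOR               # 29,296 gas
print(is_valid_gas_limit(parent, parent + step - 1))   # True: largest allowed increase
print(is_valid_gas_limit(parent, parent + step))       # False: one gas too far
print(is_valid_gas_limit(parent, parent - step + 1))   # True: largest allowed decrease
```

Since each step is capped at about 0.1% of the parent’s limit, a meaningful change takes many consecutive blocks whose producers all push in the same direction.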

Then there’s SKALE. In 2025, Dartmouth College tested 12 major blockchains. SKALE hit 397.7 transactions per second with a finality time of just 1.46 seconds. How? It doesn’t rely on one big chain. It runs 19 parallel blockchains, each with its own block size and consensus rules. Each chain carries its own traffic, so activity on one doesn’t clog the others. It’s like having 19 lanes instead of one. If each chain sustains that rate, total throughput comes to over 7,500 TPS. No one’s talking about block size here; they’re talking about architecture.
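The 7,500 figure is simple multiplication, and it only holds if every chain runs at the measured rate at the same time. The sketch below makes that assumption explicit; utilization is a hypothetical knob for how much of each chain’s capacity is actually in use, not a SKALE parameter.

```python
def aggregate_tps(per_chain_tps: float, num_chains: int, utilization: float = 1.0) -> float:
    """Upper bound on combined throughput of independent parallel chains.

    utilization (0..1) is the assumed fraction of each chain's capacity in use;
    1.0 means every chain is saturated.
    """
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    return per_chain_tps * num_chains * utilization

print(round(aggregate_tps(397.7, 19)))        # 7556: every chain running flat out
print(round(aggregate_tps(397.7, 19, 0.25)))  # 1889: only a quarter of capacity used
```

The headline number is a ceiling: parallel chains add capacity only when demand is spread across all of them.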

Solana? It uses a different trick: high-speed consensus and parallel processing. It produces a block roughly every 400 milliseconds and runs transactions that touch different accounts at the same time. And because validators don’t have to keep the full transaction history (older ledger data is pruned and archived elsewhere), storage stays manageable. It’s not about bigger blocks; it’s about smarter design.
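Parallel processing hinges on knowing which transactions can’t interfere with each other. Below is a simplified sketch, assuming each transaction declares up front which accounts it writes (Solana transactions do list their accounts in advance). It ignores read locks and is not Solana’s actual scheduler; the names are hypothetical.

```python
from typing import List, Set, Tuple

Tx = Tuple[str, Set[str]]  # (transaction id, accounts the transaction writes)

def schedule_batches(txs: List[Tx]) -> List[List[str]]:
    """Group transactions into batches whose members touch disjoint accounts.

    Batches run one after another; everything inside a batch could run in
    parallel. A transaction is placed after the last batch it conflicts with,
    so conflicting transactions keep their original order.
    """
    batch_ids: List[List[str]] = []
    batch_locks: List[Set[str]] = []
    for tx_id, writes in txs:
        last_conflict = -1
        for i, locked in enumerate(batch_locks):
            if not locked.isdisjoint(writes):
                last_conflict = i
        target = last_conflict + 1
        if target == len(batch_ids):  # target batch doesn't exist yet
            batch_ids.append([])
            batch_locks.append(set())
        batch_ids[target].append(tx_id)
        batch_locks[target].update(writes)
    return batch_ids

txs = [
    ("pay1", {"alice", "bob"}),
    ("pay2", {"carol", "dave"}),
    ("pay3", {"bob", "erin"}),   # shares "bob" with pay1, so it must wait
    ("pay4", {"frank", "gina"}),
]
print(schedule_batches(txs))  # [['pay1', 'pay2', 'pay4'], ['pay3']]
```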

Why Bigger Isn’t Always Better

Some argue: “Just make blocks bigger. Problem solved.” But it’s not that simple. Larger blocks increase the risk of network centralization, slow down block propagation, and make it harder to recover from forks. If a block is too big, it takes longer to travel across the globe. Nodes in Africa or Southeast Asia might still be downloading the last block when the next one is already being mined. That leads to orphaned blocks: wasted work, wasted time.
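A common back-of-the-envelope model treats block discovery as a Poisson process: if a block takes t seconds to reach the rest of the network and blocks arrive every T seconds on average, the chance that someone finds a competing block in the meantime is about 1 - e^(-t/T). The delays below are hypothetical; the point is that anything slowing propagation, bigger blocks included, pushes that risk up.

```python
import math

def stale_probability(propagation_delay_s: float, block_interval_s: float = 600.0) -> float:
    """Approximate chance a competing block appears while ours propagates.

    Assumes blocks are found as a Poisson process with mean interval
    block_interval_s: P(at least one block within t) = 1 - exp(-t / T).
    """
    return 1 - math.exp(-propagation_delay_s / block_interval_s)

for delay in (2, 10, 60):  # hypothetical propagation delays in seconds
    print(f"{delay:>2}s delay -> {stale_probability(delay):.2%} stale risk")
```

Under this model, a 10-second delay puts about 1.7% of blocks at risk, while a full minute puts about 9.5% at risk.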

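There’s also the security angle. In Proof-of-Work systems like Bitcoin, miners compete to solve puzzles. Bigger blocks mean more data to download and verify before a miner can start building on the latest block, which increases the chance of a miner getting left behind. A large pool never has to wait for its own blocks, so slow propagation hurts small miners more than big ones. Over time, only the biggest mining pools can keep up. That concentrates power. And concentrated power breaks the promise of blockchain.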

And let’s not forget cost. Storing a blockchain with 100GB of data? Easy on a server. Hard on a phone. Hard on a low-end laptop. Hard on someone in a developing country with slow internet. If blockchain becomes a luxury for the rich, it loses its purpose.

The Real Solution: Layer-Two and Beyond

Many developers and researchers argue that increasing block size isn’t the long-term fix. It’s a band-aid. The real innovation is happening off-chain.

Lightning Network for Bitcoin? It lets you send thousands of payments almost instantly without touching the main chain. You open a payment channel with one on-chain transaction, transact privately off-chain as often as you like, then settle the final balance with one more on-chain transaction. Ethereum’s rollups apply a related idea: they bundle hundreds of transactions into one batch posted to the main chain, reducing load on the main network.
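A toy model shows why channels take pressure off the chain: only opening and closing touch it, no matter how many payments happen in between. This sketch deliberately ignores the signatures, revocation penalties, and multi-hop routing the real Lightning Network depends on; the class and its names are hypothetical, and amounts are in satoshis.

```python
class PaymentChannel:
    """Toy two-party channel: only opening and closing touch the chain."""

    def __init__(self, alice_deposit: int, bob_deposit: int) -> None:
        self.balances = {"alice": alice_deposit, "bob": bob_deposit}
        self.onchain_txs = 1        # funding transaction that opens the channel
        self.offchain_updates = 0
        self.is_open = True

    def pay(self, sender: str, receiver: str, amount: int) -> None:
        if not self.is_open:
            raise RuntimeError("channel is closed")
        if amount <= 0 or self.balances[sender] < amount:
            raise ValueError("invalid amount")
        # Off-chain: in a real channel both parties would sign the new split.
        self.balances[sender] -= amount
        self.balances[receiver] += amount
        self.offchain_updates += 1

    def close(self) -> dict:
        if not self.is_open:
            raise RuntimeError("channel already closed")
        self.is_open = False
        self.onchain_txs += 1       # settlement transaction with final balances
        return dict(self.balances)

ch = PaymentChannel(alice_deposit=100_000, bob_deposit=0)
for _ in range(1_000):
    ch.pay("alice", "bob", 50)
final = ch.close()
print(final, ch.onchain_txs, ch.offchain_updates)
# prints "{'alice': 50000, 'bob': 50000} 2 1000"
```

A thousand payments cost the main chain exactly two transactions.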

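Sharding? Splitting the chain into smaller pieces so each node only handles a portion. That was the original plan for “Ethereum 2.0.” Ethereum has since shifted its roadmap toward helping rollups scale, starting with cheap “blob” data space for rollups (EIP-4844, proto-danksharding) in the March 2024 Dencun upgrade. It’s not about making blocks bigger; it’s about making the whole system smarter.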
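The core idea of sharding, deciding which slice of the network is responsible for which accounts, can be sketched with a hash. This is a hypothetical scheme for illustration, not Ethereum’s design, and it ignores the hard part: transactions that touch accounts on different shards.

```python
import hashlib
from typing import Dict, List

def shard_for(account: str, num_shards: int) -> int:
    """Deterministically map an account to a shard by hashing its name."""
    digest = hashlib.sha256(account.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_shards

def split_into_shards(accounts: List[str], num_shards: int) -> Dict[int, List[str]]:
    """Each shard's nodes would only store and process their own accounts."""
    shards: Dict[int, List[str]] = {i: [] for i in range(num_shards)}
    for account in accounts:
        shards[shard_for(account, num_shards)].append(account)
    return shards

accounts = [f"account-{i}" for i in range(1_000)]
shards = split_into_shards(accounts, 4)
print({shard: len(members) for shard, members in shards.items()})
```

Each of the four shards ends up with roughly a quarter of the accounts, so each group of nodes carries roughly a quarter of the load.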

Even SKALE’s multi-chain model isn’t just about size. It’s about distribution. Parallel processing. Scalable architecture. That’s the future.

What’s the Right Balance?

There’s no universal answer. For a store of value like Bitcoin, security and decentralization matter more than speed. For a payment network or gaming platform, speed and low fees are non-negotiable.

Here’s what works:

- If you want maximum decentralization: Keep block size small. Let layer-two solutions handle volume.

- If you need high throughput: Go with flexible limits, sharding, or parallel chains.

- If you’re building something real-world: Combine on-chain security with off-chain speed.

The blockchain that wins isn’t the one with the biggest block. It’s the one that balances speed, cost, security, and access. Anything else is just noise.

What happens if a blockchain’s block size is too small?

If block size is too small, transactions pile up. Fees rise sharply during peak times because users compete to get their transactions confirmed first. Confirmation times stretch from minutes to hours, or even days. This makes the network unusable for everyday payments, gaming, or apps that need fast responses. Bitcoin’s congestion in late 2017 is a textbook example: fees hit over $50, and many users moved to alternatives.
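The bidding war follows from how block producers fill limited space. The sketch below is a simplified version of that logic, assuming a miner simply takes the highest fee-per-byte transactions that fit. Real Bitcoin Core block assembly also accounts for dependent (parent and child) transactions, which this ignores; the names and numbers are hypothetical.

```python
from typing import List, NamedTuple

class MempoolTx(NamedTuple):
    txid: str
    size_bytes: int
    fee_sats: int

    @property
    def feerate(self) -> float:
        """Fee paid per byte of block space (satoshis per byte)."""
        return self.fee_sats / self.size_bytes

def build_block(mempool: List[MempoolTx], max_block_bytes: int) -> List[MempoolTx]:
    """Greedily fill a block with the highest-feerate transactions that fit."""
    block: List[MempoolTx] = []
    used = 0
    for tx in sorted(mempool, key=lambda t: t.feerate, reverse=True):
        if used + tx.size_bytes <= max_block_bytes:
            block.append(tx)
            used += tx.size_bytes
    return block

mempool = [
    MempoolTx("a", 250, 2_500),   # 10 sat/byte
    MempoolTx("b", 250, 500),     #  2 sat/byte
    MempoolTx("c", 400, 8_000),   # 20 sat/byte
    MempoolTx("d", 300, 300),     #  1 sat/byte
]
print([tx.txid for tx in build_block(mempool, max_block_bytes=700)])  # ['c', 'a']
```

With only 700 bytes available, c and a make it in; b and d must wait for a later block or bid higher.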

Can block size be increased without a hard fork?

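Not directly. Raising the raw size limit requires a hard fork because it breaks backward compatibility: nodes running old software reject any block larger than their limit. Unless everyone upgrades, the network splits, with one chain following the old rule and another following the new. Bitcoin Cash was born this way, and Bitcoin SV later split from Bitcoin Cash the same way. The notable exception is Bitcoin’s 2017 SegWit upgrade, which raised effective capacity through a soft fork. Without community consensus and a coordinated upgrade, a block size increase will cause chaos.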
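Bitcoin’s SegWit soft fork shows how capacity can rise without a hard fork. Under BIP 141, the 1MB size cap became a block weight limit of 4,000,000 units, where weight is three times the block’s size without witness (signature) data plus its full size. Because the total can never be smaller than the witness-stripped size, a valid block can never look larger than 1MB to old nodes, which only see the stripped version. A minimal sketch of that rule, with a hypothetical helper name:

```python
MAX_BLOCK_WEIGHT = 4_000_000  # BIP 141 consensus limit, in weight units

def block_weight(base_size: int, total_size: int) -> int:
    """BIP 141 block weight: base size * 3 + total size.

    base_size:  bytes when serialized without witness data (what old nodes see)
    total_size: bytes including witness data
    """
    if total_size < base_size:
        raise ValueError("total size includes the base, so it can't be smaller")
    return base_size * 3 + total_size

# No witness data: base == total, so 1MB is still the ceiling.
print(block_weight(1_000_000, 1_000_000) <= MAX_BLOCK_WEIGHT)  # True
# Witness-heavy block: old nodes see 0.7MB, yet it carries 1.9MB in total.
print(block_weight(700_000, 1_900_000) <= MAX_BLOCK_WEIGHT)    # True
print(block_weight(800_000, 1_900_000) <= MAX_BLOCK_WEIGHT)    # False
```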

Why does Ethereum use gas limits instead of block size?

Ethereum’s blocks aren’t limited by data size; they’re limited by computational work. Each transaction consumes gas, which measures how much processing it needs. A simple transfer uses 21,000 gas. A complex smart contract call might use 1 million or more. By capping total gas per block (a 15 million target and 30 million hard cap after the 2021 London upgrade, with the cap raised since), Ethereum controls processing load, not data size. This lets it handle complex apps while still keeping block size manageable. It’s more flexible, but harder to predict.
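With those numbers, a block’s capacity is a single division. This sketch uses the London-era 15 million gas target and 30 million hard cap, and treats the 1-million-gas contract call as a hypothetical round number.

```python
SIMPLE_TRANSFER_GAS = 21_000  # fixed cost of a plain ETH transfer
HEAVY_CALL_GAS = 1_000_000    # hypothetical complex contract call

def max_txs_per_block(block_gas: int, gas_per_tx: int) -> int:
    """How many identical transactions fit under a block's gas budget."""
    return block_gas // gas_per_tx

for label, block_gas in (("15M target", 15_000_000), ("30M cap", 30_000_000)):
    transfers = max_txs_per_block(block_gas, SIMPLE_TRANSFER_GAS)
    heavy_calls = max_txs_per_block(block_gas, HEAVY_CALL_GAS)
    print(f"{label}: {transfers} transfers or {heavy_calls} heavy contract calls")
# prints "15M target: 714 transfers or 15 heavy contract calls"
#        "30M cap: 1428 transfers or 30 heavy contract calls"
```

At 714 transfers per block and one block per 12-second slot, that works out to roughly 60 simple transfers per second at the target.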

Do larger blocks make blockchains more secure?

Not necessarily. Larger blocks can actually reduce security. They slow down block propagation, increasing the chance of orphaned blocks. That gives miners an incentive to centralize, since only big players can handle the bandwidth and storage. Centralization makes the network more vulnerable to attacks. Security comes from distributed validation, not bigger blocks.

Is SKALE’s 7,500 TPS the future of blockchain?

It’s one path forward. SKALE’s model (19 interconnected chains, each with its own block size and consensus) shows that scalability doesn’t require bigger blocks. It requires better architecture. Other chains are following similar paths: sharding (Ethereum), sidechains (Polygon), and rollups (Optimism, Arbitrum). The future isn’t one giant chain. It’s a network of specialized chains working together.

If you’re trying to choose a blockchain for an app, don’t just look at transaction speed. Ask: Who runs the nodes? How much storage does it need? Can everyday users participate? The answer might surprise you.
